Google’s Tensor Processing Units (TPUs) are specialized chips designed to accelerate machine learning workloads, particularly those involving neural networks. These application-specific integrated circuits (ASICs) are optimized for the high-volume, low-precision computations that dominate AI training and inference. On those workloads they can deliver more performance per watt than general-purpose CPUs, and often than GPUs.

A Brief History of TPUs

Google began using TPUs internally in its data centers in 2015. When the company announced them publicly at the Google I/O conference in May 2016, the first-generation TPU had already been operational for over a year, powering services like Google Search, Photos, and Translate. TPUs also played a role in landmark AI achievements, such as AlphaGo’s victory over Lee Sedol in 2016 and the development of AlphaZero for games like chess and Go. In 2018, Google made TPUs available to third parties through its Cloud Platform, widening access to the technology. Development has involved collaboration with Broadcom, with the chips fabricated at foundries like TSMC. Over the years, TPUs have evolved through multiple generations, each building on the last to handle increasingly complex AI models.

The Architecture Behind TPUs

At their core, TPUs are built around a systolic array architecture, which excels at matrix multiplication, a fundamental operation in neural networks. In a systolic array, operands pass directly from one processing element to the next, so data flows efficiently without complex control logic, and the design can focus on high throughput for low-precision operations on 8-bit integers or bfloat16 floating-point values. Unlike GPUs, TPUs omit hardware for graphics tasks and instead prioritize input/output efficiency and energy per operation, which makes them well suited to convolutional neural networks (CNNs). They are mounted in heatsink assemblies that fit into standard data center racks, often connected via high-speed interconnects for pod-scale computing.
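To make the systolic idea concrete, here is a minimal pure-Python sketch of a matrix multiply on a grid of processing elements (PEs). It is an illustration only: the function name `systolic_matmul` is hypothetical, and the output-stationary dataflow (each PE keeps one output value while operands stream past it) was chosen for simplicity. Real TPU matrix units may organize the dataflow differently.

```python
def systolic_matmul(a, b):
    """Multiply a (n x k) by b (k x m) on a simulated n x m grid of PEs.

    Each PE (i, j) accumulates one output element. Values from `a` enter at
    the left edge and move right one PE per cycle; values from `b` enter at
    the top edge and move down. Inputs are skewed in time so that matching
    operands meet at the right PE on the same cycle.
    """
    n, k, m = len(a), len(b), len(b[0])
    acc = [[0] * m for _ in range(n)]    # running sum held in each PE
    a_reg = [[0] * m for _ in range(n)]  # operand currently moving right
    b_reg = [[0] * m for _ in range(n)]  # operand currently moving down

    # The last operands reach the bottom-right PE after n + m + k - 2 cycles.
    for t in range(n + m + k - 2):
        new_a = [[0] * m for _ in range(n)]
        new_b = [[0] * m for _ in range(n)]
        for i in range(n):
            for j in range(m):
                if j == 0:
                    s = t - i  # row i is delayed by i cycles at the left edge
                    new_a[i][j] = a[i][s] if 0 <= s < k else 0
                else:
                    new_a[i][j] = a_reg[i][j - 1]  # take from left neighbor
                if i == 0:
                    s = t - j  # column j is delayed by j cycles at the top edge
                    new_b[i][j] = b[s][j] if 0 <= s < k else 0
                else:
                    new_b[i][j] = b_reg[i - 1][j]  # take from upper neighbor
        a_reg, b_reg = new_a, new_b

        # Every PE performs one multiply-accumulate per cycle.
        for i in range(n):
            for j in range(m):
                acc[i][j] += a_reg[i][j] * b_reg[i][j]
    return acc


result = systolic_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(result)
```

Note that no PE ever addresses memory or decodes an instruction: it only multiplies, adds, and forwards to its neighbors, which is why the control logic can stay so simple.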

Key components include on-chip memory for fast access, high-bandwidth memory (HBM) for larger datasets, and specialized units like SparseCore in newer models for handling sparse data in embeddings. TPUs are programmed through the XLA compiler, which supports TensorFlow and JAX directly and PyTorch through the PyTorch/XLA extension.
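Embedding lookups are a useful example of why sparse data gets special treatment. The sketch below, in plain Python with hypothetical function names, shows that an embedding lookup reads only a handful of rows from a table, while the mathematically equivalent dense formulation multiplies a mostly-zero vector by the entire table. It illustrates the access pattern only and does not model how SparseCore itself works.

```python
def embedding_lookup_sum(table, ids):
    """Sparse path: gather only the rows named by `ids` and sum them."""
    pooled = [0.0] * len(table[0])
    for row_id in ids:
        for d, value in enumerate(table[row_id]):
            pooled[d] += value
    return pooled


def dense_equivalent(table, ids):
    """Dense path: multiply a multi-hot vector by the whole table."""
    multi_hot = [0.0] * len(table)
    for row_id in ids:
        multi_hot[row_id] += 1.0
    dim = len(table[0])
    return [
        sum(multi_hot[r] * table[r][d] for r in range(len(table)))
        for d in range(dim)
    ]


# A toy 4-row table; production embedding tables can have millions of rows.
table = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]
ids = [0, 2]

sparse_result = embedding_lookup_sum(table, ids)
dense_result = dense_equivalent(table, ids)
print(sparse_result, dense_result)
```

Both paths give the same answer, but the sparse path touches two rows while the dense path touches all of them, a gap that grows with the size of the table.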

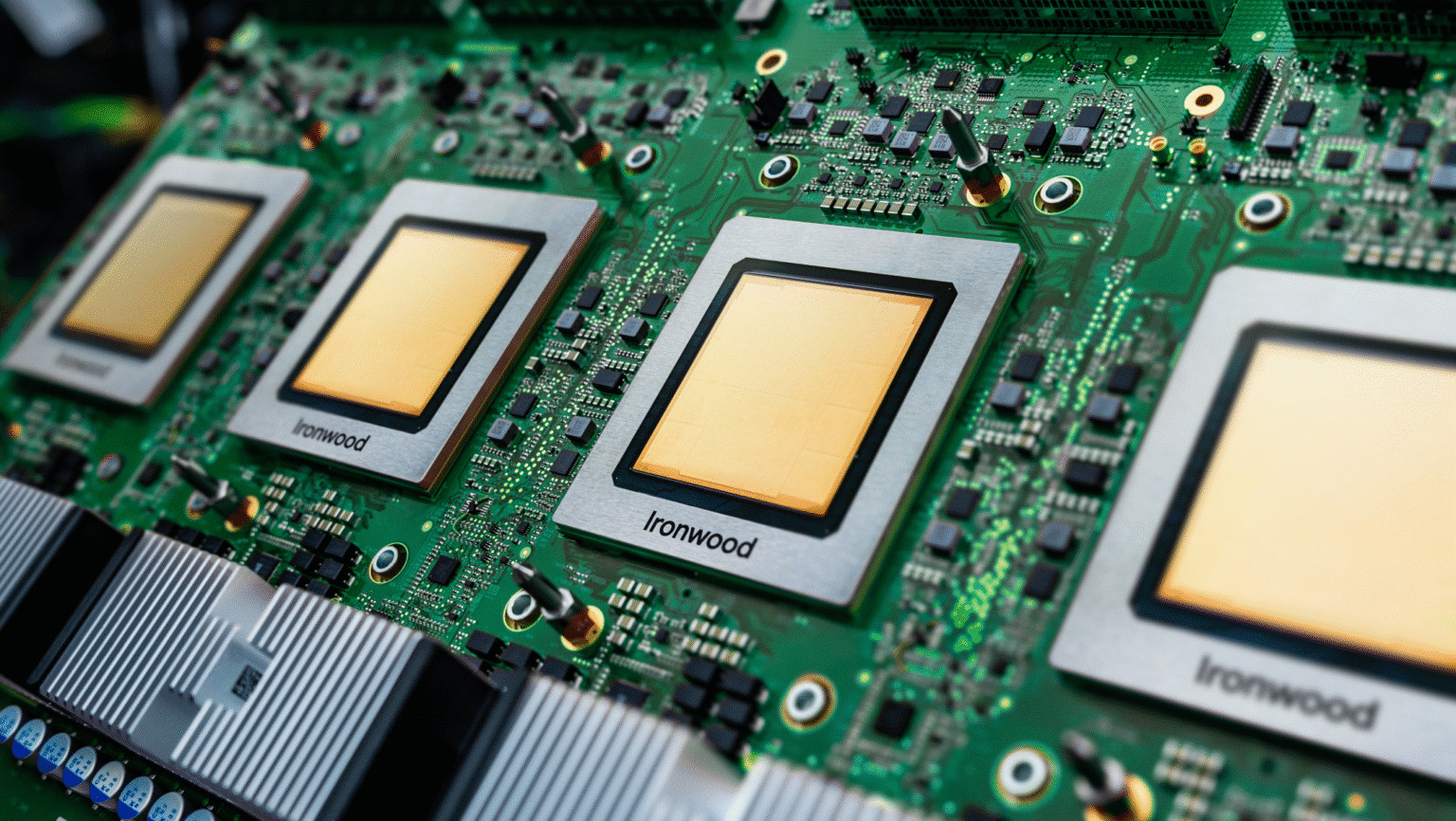

Generations of TPUs: From v1 to Ironwood

TPUs have seen rapid iteration, with each version improving performance, efficiency, and scalability.

| Generation | Year | Key Specs | Performance (TOPS) | Efficiency (TOPS/W) |
|---|---|---|---|---|
| v1 | 2015 | 28 nm, 700 MHz, 8 GiB DDR3 | 23 (int8) | 0.31 |
| v2 | 2017 | 16 nm, 700 MHz, 16 GiB HBM | 45 (bf16) | 0.16 |
| v3 | 2018 | 16 nm, 940 MHz, 32 GiB HBM | 123 (bf16) | 0.56 |
| v4 | 2021 | 7 nm, 1050 MHz, 32 GiB HBM | 275 (bf16) | 1.62 |
| v5e | 2023 | ~300 mm² die, 16 GiB HBM | 197 (bf16)/393 (int8) | N/A |
| v5p | 2023 | 95 GiB HBM | 459 (bf16)/918 (int8) | N/A |
| v6e (Trillium) | 2024 | 32 GiB HBM, 1750 MHz | 918 (bf16)/1836 (int8) | N/A |
| v7 (Ironwood) | 2025 | 192 GiB HBM, 7.37 TB/s | 4614 (fp8) | ~4.7 |

Data sourced from comprehensive summaries.

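The latest, Ironwood (v7), introduced in April 2025, is optimized for inference in generative AI. It offers 4,614 TFLOPS of FP8 compute per chip and scales to 9,216-chip pods delivering 42.5 exaflops, which Google describes as over 24 times the compute of the El Capitan supercomputer. That comparison sets Ironwood's low-precision FP8 throughput against El Capitan's 64-bit benchmark figure, so it is not a like-for-like measure. Ironwood also features 2x performance per watt over Trillium and an enhanced SparseCore for ultra-large embeddings.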
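The pod-level figure follows directly from the per-chip number, as this short check shows. The chip count and per-chip throughput come from the paragraph above; the El Capitan value of roughly 1.742 exaflops is an assumption based on its published FP64 benchmark result, and the two systems are measured at different precisions.

```python
CHIP_FP8_TFLOPS = 4614        # per-chip peak from the text (FP8)
POD_CHIPS = 9216              # chips in a full pod, from the text
EL_CAPITAN_EXAFLOPS = 1.742   # assumption: FP64 benchmark result, not FP8


def pod_exaflops(chips, tflops_per_chip):
    """Aggregate peak throughput; 1 exaflop = 1,000,000 teraflops."""
    return chips * tflops_per_chip / 1_000_000


pod = pod_exaflops(POD_CHIPS, CHIP_FP8_TFLOPS)
ratio = pod / EL_CAPITAN_EXAFLOPS
print(f"Pod peak: {pod:.1f} exaflops, about {ratio:.1f}x the El Capitan figure")
```

This is a peak-rate calculation only; sustained throughput on a real workload depends on interconnect, memory bandwidth, and utilization.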

Benefits of Using TPUs

TPUs are designed for cost-effectiveness as well as raw performance: Google reports that v5e provides up to 2.5x more inference throughput per dollar than TPU v4. They scale through Google Kubernetes Engine (GKE) and support high-reliability AI workloads in secure data centers. Energy efficiency has improved dramatically, with Ironwood being nearly 30x more efficient than v2. For developers, open-source tools like MaxText simplify large model training.
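The "nearly 30x" efficiency figure can be checked against the generations table earlier in the article. The sketch below uses only the TOPS/W values listed there; the helper name `efficiency_gain` is illustrative. The table mixes precisions (int8, bf16, fp8) across generations, so treat the ratios as rough indicators and not as like-for-like comparisons.

```python
# TOPS/W values copied from the generations table (entries marked N/A omitted).
TOPS_PER_WATT = {
    "v1": 0.31,
    "v2": 0.16,
    "v3": 0.56,
    "v4": 1.62,
    "v7": 4.7,  # approximate, listed as ~4.7
}


def efficiency_gain(newer, older):
    """How many times more TOPS/W the newer generation delivers."""
    return TOPS_PER_WATT[newer] / TOPS_PER_WATT[older]


gain = efficiency_gain("v7", "v2")
print(f"Ironwood vs v2: {gain:.1f}x more TOPS/W")
```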

Applications in the Real World

TPUs power a wide range of AI applications, from fine-tuning large language models (LLMs) on custom data to deploying generative AI in Vertex AI. They underpin Google’s own services: a single TPU can process over 100 million photos a day in Google Photos, and TPUs support search ranking with RankBrain. Edge TPUs extend this to devices like Pixel phones for on-device ML, enabling features in cameras and assistants. In cloud environments, TPUs are used for MLOps pipelines, and SparseCore extends their reach to scientific simulations and financial modeling.

The Future of TPUs

As AI workloads shift toward large-scale inference and generative models, TPUs like Ironwood are central to Google's strategy, integrating with the AI Hypercomputer to serve models like Gemini and AlphaFold. With availability expanding in 2025, broader adoption is likely in industries that need massive-scale AI.

In conclusion, Google TPUs have transformed AI hardware, driving efficiency and innovation from data centers to edge devices. As technology advances, they continue to redefine what’s possible in machine learning.