In the rapidly evolving landscape of Artificial Intelligence, we are witnessing an “arms race” of scale. Every few months, a new Large Language Model (LLM) or Vision Transformer is released, some reportedly with hundreds of billions or even trillions of parameters, and requiring large GPU clusters just to run. While these “Titan” models represent the pinnacle of human-engineered intelligence, they share a critical flaw: they are too big, too slow, and too expensive for everyday use.

This creates a fundamental tension in AI development. How do we harness the profound reasoning capabilities of a trillion-parameter model while making it small enough to run on a smartphone, a drone, or a laptop?

The answer lies in a sophisticated technique known as Knowledge Distillation.

The Scaling Paradox: Why Bigger Isn’t Always Better

To understand distillation, we must first understand the problem of scale. In modern Deep Learning, “more” has traditionally meant “better.” By adding more layers and more neurons (parameters), models become better at capturing the nuances of language, the textures of images, and the complexities of logic.

However, this scaling comes with a heavy price tag:

- Inference Latency: A massive model takes a long time to process a single prompt. In applications like autonomous driving or real-time voice translation, a delay of even half a second can be catastrophic.

- Computational Cost: Serving models like GPT-4 to millions of users requires large fleets of high-end GPUs (such as the NVIDIA H100) running around the clock. The electricity and hardware costs are astronomical.

- Memory Constraints: Most consumer devices (smartphones, IoT sensors, and even standard laptops) lack the memory (RAM or GPU VRAM) needed to load a massive model.
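To make the memory constraint concrete, here is a back-of-the-envelope sketch: a model’s weights alone occupy roughly parameter count × bytes per parameter. The model sizes below are hypothetical round numbers, not figures for any specific model, and the estimate ignores activations, caches, and runtime overhead.

```python
# Rough estimate of the memory needed just to hold a model's weights.
# Ignores activations, KV caches, optimizer state, and framework overhead.

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

def weight_memory_gb(num_params: int, precision: str = "fp16") -> float:
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9

# Hypothetical model sizes chosen purely for illustration.
for name, params in [("70B-parameter model", 70_000_000_000),
                     ("1B-parameter model", 1_000_000_000)]:
    print(f"{name}: {weight_memory_gb(params):.0f} GB at fp16")
```

At 16-bit precision the hypothetical 70-billion-parameter model needs about 140 GB for its weights alone, far beyond a phone, while the 1-billion-parameter model needs about 2 GB.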

If AI is to become truly ubiquitous—integrated into our glasses, our cars, and our appliances—we cannot rely on giant models alone. We need a way to “compress” intelligence. This is where Knowledge Distillation enters the frame.

What is Knowledge Distillation?

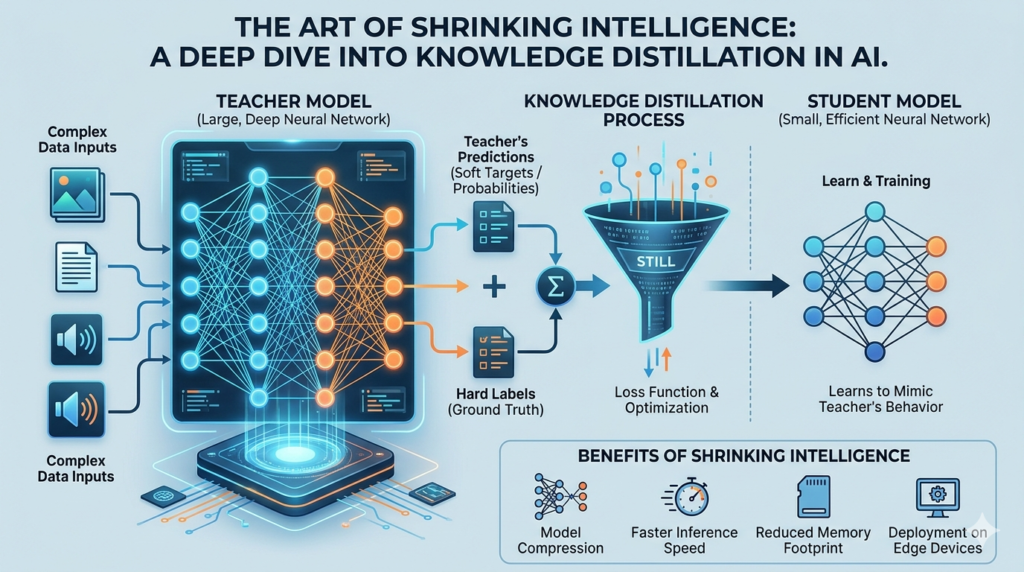

At its core, Knowledge Distillation is a machine learning technique where a small, compact model (the Student) is trained to reproduce the behavior and performance of a large, complex, pre-trained model (the Teacher).

The goal is not for the Student to learn the data from scratch, but rather to learn the logic and patterns that the Teacher has already mastered. If the Teacher is a world-class professor, the Student is a pupil studying the professor’s condensed lecture notes rather than reading every single textbook in the library.

The Mechanics: Hard Targets vs. Soft Targets

To understand how this works technically, we have to look at how neural networks “learn.”

In standard supervised learning, a model is trained using “Hard Targets.” If you are training a model to recognize animals and you show it a picture of a Golden Retriever, the label is a one-hot vector: [Dog: 1, Cat: 0, Car: 0]. The model is penalized according to how little probability it assigns to “Dog.”

While effective, hard targets are “information-poor.” They tell the model what is right, but they don’t explain the relationships between things.
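A small sketch of why hard targets are information-poor: with a one-hot label, cross-entropy reduces to the negative log-probability of the correct class, so the loss cannot tell apart two predictions that rank the wrong classes differently. The two student predictions are made up for illustration.

```python
import math

def cross_entropy(target, predicted):
    """H(target, predicted) = -sum_i target_i * log(predicted_i).
    Zero-weight target entries are skipped, since they contribute nothing."""
    return -sum(t * math.log(p) for t, p in zip(target, predicted) if t > 0)

hard_label = [1.0, 0.0, 0.0]  # one-hot label for (Dog, Cat, Car): "Dog"

# Two hypothetical student predictions that both give "Dog" 0.7,
# but disagree on whether the image looks more like a cat or a car.
student_a = [0.7, 0.2, 0.1]
student_b = [0.7, 0.1, 0.2]

loss_a = cross_entropy(hard_label, student_a)
loss_b = cross_entropy(hard_label, student_b)
print(loss_a == loss_b)  # only p(Dog) contributes to the hard-label loss
```

Both losses equal -log(0.7), so the hard label gives the model no signal about which wrong answer is “less wrong.”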

Knowledge Distillation uses “Soft Targets” instead. When a massive Teacher model looks at that same Golden Retriever, its output layer doesn’t just say “Dog.” It produces a probability distribution:

Dog: 0.90, Cat: 0.08, Car: 0.02

That 0.08 for “Cat” is incredibly important. It tells the Student model that even though this is a dog, it shares certain visual features with a cat (like fur or ears) that distinguish it from a car. This subtle nuance—the relative similarity between classes—is what Geoffrey Hinton and his colleagues termed “Dark Knowledge.”
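In Hinton et al.’s formulation, these probabilities come from a softmax with a temperature T, p_i = exp(z_i / T) / Σ_j exp(z_j / T), where z are the Teacher’s raw logits. Raising T above 1 flattens the distribution so the small “dark knowledge” probabilities become larger and easier for the Student to learn from. The logits below are hypothetical, chosen so that T = 1 reproduces the 0.90 / 0.08 / 0.02 example.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Convert raw logits into probabilities, softened by `temperature`."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical Teacher logits for (Dog, Cat, Car).
teacher_logits = [math.log(0.90), math.log(0.08), math.log(0.02)]

for T in (1.0, 4.0):
    probs = softmax_with_temperature(teacher_logits, T)
    print(f"T={T}: " + ", ".join(f"{p:.2f}" for p in probs))
```

At T = 1 the output matches the distribution above; at T = 4 the Cat and Car probabilities rise to roughly 0.28 and 0.20, exposing the similarity structure much more strongly.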

By training the Student to match these “soft” probability distributions rather than just the “hard” labels, the Student learns the underlying structure of the data much more efficiently. It isn’t just memorizing answers; it is learning how the Teacher relates one class to another.
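A sketch of matching soft targets, using the Teacher distribution from the example above (Dog 0.90, Cat 0.08, Car 0.02) and two hypothetical Student predictions that give “Dog” the same probability. Unlike a hard-label loss, the soft-target cross-entropy rewards the Student that ranks “Cat” above “Car.”

```python
import math

def soft_cross_entropy(teacher_probs, student_probs):
    """Cross-entropy of the Student's distribution against the Teacher's soft targets."""
    return -sum(t * math.log(s) for t, s in zip(teacher_probs, student_probs))

teacher = [0.90, 0.08, 0.02]   # (Dog, Cat, Car) soft targets
student_a = [0.7, 0.2, 0.1]    # thinks the image looks more like a cat than a car
student_b = [0.7, 0.1, 0.2]    # thinks the image looks more like a car than a cat

loss_a = soft_cross_entropy(teacher, student_a)
loss_b = soft_cross_entropy(teacher, student_b)
print(f"Student A: {loss_a:.4f}, Student B: {loss_b:.4f}")
```

Student A gets the lower loss, so training nudges the Student toward the Teacher’s view that a dog resembles a cat more than a car. Because the Teacher’s entropy is fixed, minimizing this cross-entropy is equivalent to minimizing the KL divergence from the Teacher’s distribution to the Student’s.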

The Three Pillars of the Distillation Process

The distillation process generally follows a structured workflow:

1. The Training Phase (Teacher Preparation)

Before distillation can begin, the Teacher model must be fully trained. This model is usually either an ensemble of many networks or a single, massive architecture trained on a vast dataset. At this stage, we don’t care about speed; we only care about maximum accuracy.
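When the Teacher is an ensemble, a simple way to turn it into a single set of soft targets is to average the member models’ predicted distributions. The sketch below uses the arithmetic mean over hypothetical predictions from three ensemble members; other combinations (such as a geometric mean) are also possible.

```python
def average_distributions(member_probs):
    """Arithmetic mean of several models' probability distributions."""
    n_models = len(member_probs)
    n_classes = len(member_probs[0])
    return [sum(p[i] for p in member_probs) / n_models for i in range(n_classes)]

# Hypothetical predictions from three ensemble members for (Dog, Cat, Car).
members = [
    [0.92, 0.06, 0.02],
    [0.88, 0.10, 0.02],
    [0.90, 0.08, 0.02],
]
soft_targets = average_distributions(members)
print([round(p, 2) for p in soft_targets])
```

The averaged distribution still sums to 1, so it can serve as soft targets exactly like a single Teacher’s output.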

2. The Mimicry Phase (The Distillation Loop)

The Student model is fed the same input data as the Teacher. However, the loss function (the mathematical way the model calculates its errors) is modified. Instead of just measuring the error against the ground truth, the loss function now measures two things:

- Distillation Loss: How much does the Student’s prediction deviate from the Teacher’s “soft” prediction?

- Student Loss: How much does the Student’s prediction deviate from the actual correct answer (the hard label)?

By balancing these two, the Student is forced to be both accurate to the truth and faithful to the Teacher’s nuanced logic.
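The two-part objective can be sketched as a weighted sum, in the style of Hinton et al. (2015): a distillation term comparing softened distributions at temperature T, multiplied by T² so its gradients stay comparable in size to the hard-label term, plus ordinary cross-entropy at T = 1. The weight `alpha`, the temperature, and the logits are illustrative assumptions; practical implementations compute this over batches of tensors in a deep learning framework rather than plain Python lists.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q):
    """KL(p || q) for discrete distributions; terms with p_i = 0 contribute 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def distillation_loss(student_logits, teacher_logits, true_class,
                      temperature=4.0, alpha=0.5):
    """alpha * distillation loss + (1 - alpha) * student (hard-label) loss."""
    # Distillation loss: match the Teacher's softened distribution.
    soft_teacher = softmax(teacher_logits, temperature)
    soft_student = softmax(student_logits, temperature)
    # Scale by T^2 so the soft-target gradients keep a comparable magnitude.
    soft_loss = kl_divergence(soft_teacher, soft_student) * temperature ** 2

    # Student loss: ordinary cross-entropy against the ground-truth label (T = 1).
    hard_loss = -math.log(softmax(student_logits)[true_class])

    return alpha * soft_loss + (1 - alpha) * hard_loss

# Hypothetical logits for (Dog, Cat, Car); the true class is index 0 ("Dog").
teacher_logits = [5.0, 2.5, 0.5]
student_logits = [3.0, 0.5, 1.0]
print(distillation_loss(student_logits, teacher_logits, true_class=0))
```

Setting `alpha` closer to 1 leans more on the Teacher, while `alpha = 0` recovers ordinary supervised training. Using KL divergence or cross-entropy for the soft term gives the same gradients, since the two differ only by the Teacher’s constant entropy.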

3. The Deployment Phase (The Final Product)

Once training is complete, the Teacher model is no longer needed at inference time. We are left with a Student model that has far fewer parameters but carries much of the “wisdom” of its predecessor. This Student is now ready for deployment on edge devices.

Real-World Applications and Success Stories

Knowledge Distillation isn’t just a theoretical concept; it is currently powering much of the AI we use daily.

1. Natural Language Processing (NLP)

The most famous success story is DistilBERT. BERT (Bidirectional Encoder Representations from Transformers) transformed NLP by letting models understand words in context. However, BERT was heavy and slow. Researchers at Hugging Face created DistilBERT using knowledge distillation. The result? A model that is 40% smaller and 60% faster while retaining roughly 97% of BERT’s language-understanding performance on the GLUE benchmark. This made BERT-style language understanding practical on far more constrained hardware, including mobile devices.
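The “40% smaller” figure follows from simple arithmetic on parameter counts. The values below (about 110 million for BERT-base and 66 million for DistilBERT) are the commonly reported approximate sizes, not exact counts.

```python
# Approximate, commonly reported parameter counts (in millions).
BERT_BASE_PARAMS_M = 110
DISTILBERT_PARAMS_M = 66

def size_reduction(teacher_params, student_params):
    """Fraction by which the student is smaller than the teacher."""
    return 1 - student_params / teacher_params

reduction = size_reduction(BERT_BASE_PARAMS_M, DISTILBERT_PARAMS_M)
print(f"DistilBERT is about {reduction:.0%} smaller than BERT-base")
```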

2. Computer Vision

In medical imaging, doctors use AI to help detect tumors in X-rays or MRIs. A massive model might be trained on a supercomputer to achieve very high accuracy. Through distillation, much of that capability could be transferred to a model small enough for a portable device, such as a handheld ultrasound scanner, allowing doctors in remote areas to benefit from high-level diagnostic AI without needing a server farm.

3. Autonomous Systems

Self-driving cars require split-second decision-making. A massive vision transformer might take too long to “think” about whether an object is a pedestrian or a shadow. Distilling that heavy vision model into a lightweight version helps the car react in real time, and in this setting lower latency translates directly into safety.

The Challenges and Limitations

While distillation is powerful, it is not a magic wand. There are inherent trade-offs:

- The Performance Ceiling: A Student model usually does not outperform its Teacher. It also tends to “inherit” the Teacher’s biases and errors: if the Teacher has a blind spot, the Student will likely have that same blind spot.

- Complexity of Training: The distillation process itself is computationally expensive. In the usual setup, every training batch passes through both the Teacher (forward pass only) and the Student, so the large model must be loaded and run throughout training, which requires significant GPU resources.
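One common way to cut this cost, sketched here under simplifying assumptions, is to run the Teacher once over the training set, cache its soft targets, and reuse them every epoch. The `ToyTeacher` class and the file names are hypothetical stand-ins for a real model and dataset.

```python
import math
from typing import Callable, Dict, List, Sequence

def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax over a list of logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def precompute_soft_targets(teacher: Callable[[str], List[float]],
                            dataset: Sequence[str],
                            temperature: float = 4.0) -> Dict[str, List[float]]:
    """Run the Teacher once per example and cache its softened outputs."""
    return {x: softmax(teacher(x), temperature) for x in dataset}

class ToyTeacher:
    """Hypothetical Teacher that returns fixed logits and counts its forward passes."""
    def __init__(self) -> None:
        self.calls = 0

    def __call__(self, example: str) -> List[float]:
        self.calls += 1
        return [5.0, 2.5, 0.5] if example.startswith("dog") else [0.5, 4.0, 1.0]

teacher = ToyTeacher()
dataset = ["dog_001.jpg", "cat_001.jpg", "dog_002.jpg"]
cache = precompute_soft_targets(teacher, dataset)

for epoch in range(3):
    for x in dataset:
        targets = cache[x]  # cached lookup; the Teacher is not called again
        # The Student's forward/backward pass would use `targets` here.

print(f"Teacher forward passes: {teacher.calls}")
```

The Teacher runs once per example instead of once per example per epoch. This only works when inputs are fixed: with on-the-fly data augmentation, each epoch sees new inputs, so the Teacher usually has to run alongside the Student.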

- Architecture Constraints: Distillation can be harder when the Student’s architecture is fundamentally different from the Teacher’s, especially if the Student is asked to match the Teacher’s internal representations rather than only its outputs. For example, distilling a Transformer into a simple Convolutional Neural Network (CNN) is generally harder than distilling one Transformer into a smaller Transformer.

The Future: Beyond Simple Distillation

As we move toward Artificial General Intelligence (AGI), distillation will likely evolve in several exciting directions:

- Task-Specific Distillation: Instead of making a small version of a “general” model, we will distill models that are experts in very specific niches—like legal analysis, coding, or molecular biology—making them incredibly efficient for professional use.

- On-Device Continual Learning: Imagine a Student model on your phone that doesn’t just stay static but uses tiny bits of “distilled” knowledge from the cloud to learn your personal habits without ever sending your private data to a server.

- Multi-Teacher Distillation: Future AI might not learn from one “Professor,” but from an ensemble of several different Teachers, combining the strengths of various models into a single, hyper-efficient Student.

Conclusion

Knowledge Distillation represents a shift in the AI paradigm—from a focus on raw scale to a focus on optimized intelligence. It is the bridge between the massive, theoretical power of cloud-based supermodels and the practical, everyday utility of edge computing. By teaching smaller models how to “think” like the giants, we are ensuring that the future of AI is not just smart, but fast, accessible, and ubiquitous.